You know what? I feel like we don’t talk enough about the structure of metadata. We’ve been talking a fair bit in recent years about offensive subject headings, inappropriately-used call numbers, for and against demographic details in name authority records. But my time as a systems librarian has reignited my deep interest in library data structures. Learning to write SQL queries and structure data in my head like our ILS does (that is, idiosyncratically) has meant I now spend most of my work days staring at spreadsheets. And I’ve started to wonder about some things.

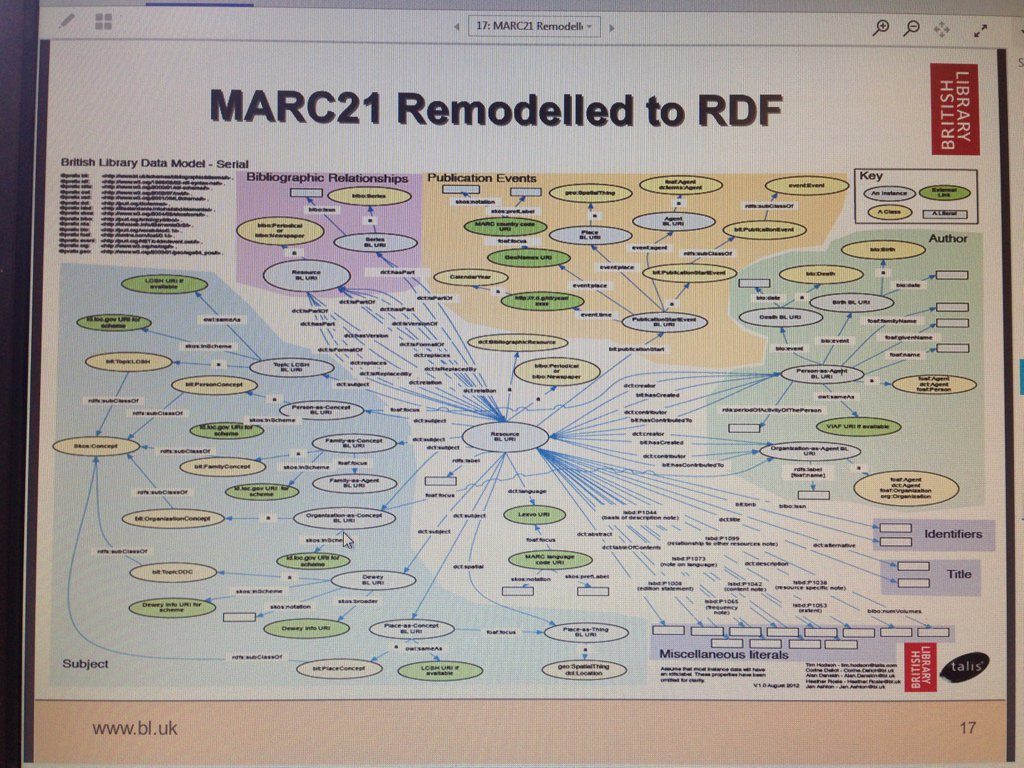

How do our data structures and systems shape our data? How do MARC principles, informed by Western ways of knowing and speaking, influence our understanding and description of the books we catalogue? What biases and perspectives do our structures encode, perpetuate and privilege?

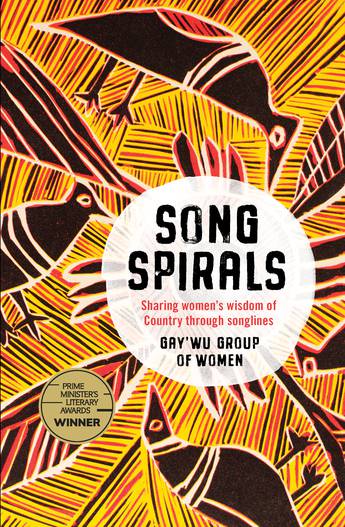

Consider the book Songspirals: sharing women’s wisdom of Country through songlines, joint winner of the 2020 Prime Minister’s Literary Awards non-fiction category. The book is credited to the Gay’wu Group of Women, a collective of four sisters and their daughter from Yolŋu country in north-east Arnhem Land, Northern Territory, and three ŋäpaki (non-Indigenous) academics with whom they have collaborated for many years. The book is told in the sisters’ voice, sharing women’s deep cultural knowledge and wisdom through five ‘songspirals’. Settler Australia might know these as ‘songlines’, a term popularised by Bruce Chatwin in his 1987 book The Songlines, but as the Gay’wu Group writes,

In this book we call them songspirals as they spiral out and spiral in, they go up and down, round and round, forever. They are a line within a cycle. They are infinite. They spiral, connecting and remaking. They twist and turn, they move and loop. This is like all our songs. Our songs are not a straight line. They do not move in one direction through time and space. They are a map we follow through Country as they connect to other clans. Everything is connected, layered with beauty. Each time we sing our songspirals we learn more, go deeper, spiral in and spiral out. (p. xvi)

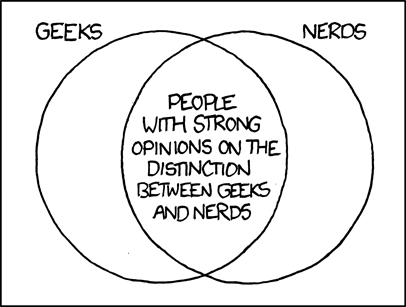

A collective author like the Gay’wu Group of Women sits uncomfortably within Western resource description paradigms. Does RDA consider them a person, family or corporate body? Would MARC enter them as a personal name (100) or a corporate name (110)? Why are these my only options? Why are groups of people considered to be ‘corporate bodies’? Why are ‘families’ limited to those who share a surname?

I borrowed Songspirals from my local library a few weeks before I was delighted to receive a copy as a birthday present. Their new, shiny catalogue distinguishes one author from the rest, mirroring the main-versus-added-entry choice MARC forces us to make, but I was puzzled to find their choice of main author was one of the eight, Laklak Burarrwaŋa. Her name was misspelt as ‘Burarrwana, Laklak’, missing the letter eng ŋ, which represents a ‘ng’ sound in Yolŋu Matha. (This letter is not commonly found on a standard keyboard, and so Laklak’s surname could also be acceptably spelt ‘Burarrwanga’.) Did this record originate in a system incapable of supporting quote-unquote ‘special characters’? Was it originally encoded in MARC-8, which appears not to support the letter ŋ? Did a cataloguer misread the letter? Or could they simply not be bothered?

It’s a questionable choice of main entry, as the Gay’wu Group of Women are prominently credited on the cover, spine and title page as the book’s author. But knowing the history of cataloguing as I do, I suspect I know why this choice was made: an old rule from AACR2 preferred personal names over corporate names for main entries, as explained in this heirloom cataloguing manual from 2003. Corporate bodies were only treated as main entries in limited circumstances, largely relating to administrative materials, legal, governmental and religious works, conference proceedings (where a 111 conference main entry was not appropriate) and for works ‘that record the collective thought of the body (e.g. reports of commissions, committees, etc.; official statements of position on external policies)’. It’s a fascinating and bizarre set of proscriptions. At no point does the manual explain the logic or context behind these rules. They are simply The Rules, to be broken or ignored at the cataloguer’s peril.

I never learned AACR2 so this convention has never made much sense to me. But I can easily imagine an elder cataloguer examining this book and going ‘hmm, the Gay’wu Group of Women don’t fit into any of the corporate body main entry rules that AACR2 burned into my brain, the members are listed individually by name on the title page, I’ll pick the first name as the main entry’ and entering Laklak’s name almost by rote. I don’t think any Australian libraries still use AACR2, but old habits die hard. It’s ridiculous that we still have to think about these things. Don’t cataloguers have better things to do than contort our data into antique data structures?

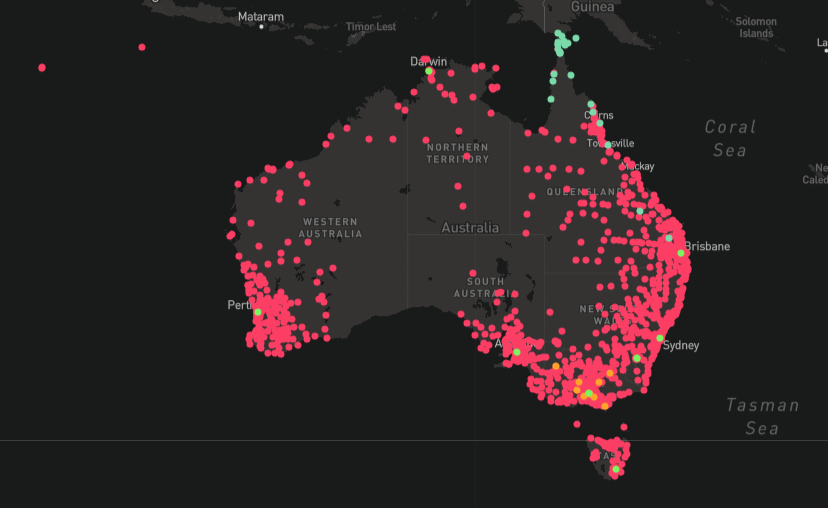

The DDC call number, 305.89915, is a catch-all for ‘books on Indigenous Australian societies’ irrespective of topic. All kinds of books end up here: Singing Bones on ethnomusicology in Arnhem Land, Sand Talk on philosophy across the continent, Surviving New England on the Anaiwan genocide. I suppose I should be grateful Songspirals wasn’t classed in 398.2049915, the number for Aboriginal Australian ‘mythology and fairy tales’. But this book is truly interdisciplinary, transcending Dewey’s rigid classes of knowledge and encompassing all corners of the Yolŋu lifeworld. In DDC logic, it makes sense that this book would be classified in an interdisciplinary place. I guess I’m just tired of seeing 305.89915 used so indiscriminately. It doesn’t help that the -9915 suffix encompasses the entirety of Indigenous Australia, with no further enumeration of specific nations or groups.

What if… we stopped choosing only one number? What if we routinely classed multiple copies of books in multiple places? A copy in history, another in the social sciences, a third in music? What if those weren’t even the categories? What if our classification system were a spiral, with multiple lines of intellectual inquiry reaching out from a core of knowledge, instead of our current linear system of ascending numbers? I wonder where the Galiwin’ku Library would place this book, having replaced their simplified DDC with a more culturally intuitive arrangement. Perhaps we should follow their lead.

The item part of the spine label, ‘BURA’, reflects the initial choice of Laklak Burarrwaŋa for main entry. The practice of including the first three or four letters of the main entry, or otherwise constructing a Cutter number, for a spine label theoretically ensures that each physical item in a given library has a unique call number. Meanwhile the ‘CUL’ at the top stands for ‘Culture and society’, part of this library service’s continuing effort to genrefy its collections. Some branches (including my local) are still arranged in Dewey order, while others are grouped more thematically. It’s been this way for years. I kinda like that they haven’t picked one yet. It keeps things interesting.
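To make the dependency on main entry concrete, here is a minimal sketch of how an item part like ‘BURA’ might be derived. The function name `item_part` and the ‘letters only, first four, uppercased’ rule are my assumptions for illustration; I don’t know how this library service actually generates its labels.

```python
def item_part(main_entry: str, length: int = 4) -> str:
    """Build a spine-label item part from a main entry heading.

    Hypothetical rule: keep alphabetic characters only (dropping spaces,
    commas and apostrophes), take the first `length`, and uppercase them.
    """
    letters = [ch for ch in main_entry if ch.isalpha()]
    return "".join(letters[:length]).upper()


# The same book lands under a different label depending on the main entry.
print(item_part("Burarrwaŋa, Laklak"))
print(item_part("Gay’wu Group of Women"))
```

Under this assumed rule, changing the main entry from one individual to the collective author changes where the book sits on the shelf, which is exactly the kind of downstream effect a single descriptive choice can have.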

Disgruntled with my local library’s cataloguing and the state of things in general, I next interrogated the Australian national union catalogue, Libraries Australia, which is now part of Trove. There are seven records for Songspirals in the database, two for the ebook and five for the paper book, collectively held by over 160 libraries across Australia. I’m not surprised to see so many records; Libraries Australia’s match-merge algorithm is notoriously wonky and often merges records incorrectly, and bad merges are a massive pain to sort out. I’d rather a dupe record than a bad merge.

The source record used by my local library appears to have been updated since they acquired the book, though the seven LA records can’t quite agree on whether Songspirals is one word or two (the text of the book spells it as one word). Pleasingly, Gay’wu Group of Women are now the main entry in all seven records (as a 110), although one uses the questionably inverted form ‘Women, Gay’wu Group of’. Some records list all eight women’s names in a 245 $c statement of responsibility field, while another lists them in a 500 general note field. Only one record gives each contributor a 700 added entry field, with the surnames painstakingly inverted; we can safely assume none of the contributors had name authority records created.

MARC makes this more complicated than it needs to be. Systems often can’t index names that aren’t in 1XX or 7XX fields. Perhaps name authority records could be automatically created, or close name matches proactively suggested? Could systems embed named-entity recognition, a form of AI, to extract and index names wherever they appear in a record? (One record spells Burarrwaŋa two different ways, neither with the letter ŋ. These kinds of simple errors don’t help.)
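The ‘close name matches proactively suggested’ idea can be sketched without any AI at all. The example below is a rough illustration only, using Python’s standard `difflib` and `unicodedata` modules: it folds the letter ŋ to ‘ng’, strips combining diacritics, and then looks for near matches among existing headings. The function names `fold` and `suggest_headings`, the cutoff value and the tiny list of headings are all assumptions of mine, not features of any real ILS or authority file.

```python
import difflib
import unicodedata


def fold(name: str) -> str:
    """Reduce a heading to a comparison key (for matching, never for display)."""
    # ŋ has no Unicode decomposition, so map it explicitly to its 'ng' spelling.
    name = name.replace("ŋ", "ng").replace("Ŋ", "Ng")
    decomposed = unicodedata.normalize("NFKD", name)
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return stripped.casefold()


def suggest_headings(candidate: str, headings: list[str], cutoff: float = 0.85) -> list[str]:
    """Return existing headings whose folded form is close to the candidate's."""
    by_key = {fold(h): h for h in headings}
    hits = difflib.get_close_matches(fold(candidate), list(by_key), n=3, cutoff=cutoff)
    return [by_key[key] for key in hits]


# Hypothetical authority headings, using only names mentioned in this post.
headings = ["Burarrwaŋa, Laklak", "Chatwin, Bruce"]
print(suggest_headings("Burarrwana, Laklak", headings))
```

The point of the design is that the correctly spelt heading stays untouched; only the comparison key is simplified, so a system could offer the proper form to a cataloguer instead of silently accepting a near miss.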

Five records misspell the collective authors’ name as ‘GayWu Group of Women’, without the glottal stop and with a capital W, which seemed a curious mistake. I refused to believe that every single cataloguer who touched these records had blithely gotten this wrong, so I wondered if a system had forced this error. Two other records included the glottal stop, confirming LA systems could handle these characters, while a peek in the LA Cataloguing Client showed that this was a data error and not a display error in the LA search interface. While poking around the backend I noticed that all five misspelt records had at some point been through the WorldCat system, evidenced by various OCLC codes in the 040 field. Could this be an OCLC system problem? I don’t know for sure, but it warrants a closer look.

Several cataloguers went to the effort of including AUSTLANG codes in their records for Songspirals. Three records include a code for Yolŋu Matha in the 041 #7 language field, but only one record has a correctly-formed code, N230. The others have ‘NT230’, which is not a valid AUSTLANG code and is likely an overcorrection by people who assume the ‘N’ stands for ‘Northern Territory’. While this mistake is simple and easily avoidable, it has also now propagated into hundreds of library catalogues. I wonder if more attentive systems could validate such language (041) or geographic (043) codes against controlled lists, including the MARC defaults and AUSTLANG.
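Validation of this kind need not be elaborate. As a minimal sketch, assuming a system held a local copy of the AUSTLANG code list, a check at the point of data entry might look something like the following. The lookup table here contains only the two codes mentioned in this post, and the names `KNOWN_AUSTLANG_CODES` and `check_austlang_code` are hypothetical; a real implementation would load the full dataset.

```python
import difflib

# Illustrative subset only: the two AUSTLANG codes discussed in this post.
# A real validator would load the complete AUSTLANG code list instead.
KNOWN_AUSTLANG_CODES = {
    "N230": "Yolŋu Matha",
    "N141": "Gumatj",
}


def check_austlang_code(code: str) -> dict:
    """Check a code against the known list and suggest near matches if invalid."""
    cleaned = code.strip()
    if cleaned in KNOWN_AUSTLANG_CODES:
        return {"code": cleaned, "valid": True, "suggestions": []}
    # Offer similar-looking valid codes so the cataloguer can confirm or correct.
    suggestions = difflib.get_close_matches(
        cleaned, list(KNOWN_AUSTLANG_CODES), n=3, cutoff=0.6
    )
    return {"code": cleaned, "valid": False, "suggestions": suggestions}


print(check_austlang_code("N230"))
print(check_austlang_code("NT230"))
```

A check like this would flag ‘NT230’ before the record is saved and point the cataloguer towards N230, although a person would still need to confirm that the suggested code is the right language.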

It’s worth noting that the library at AIATSIS, the Australian Institute of Aboriginal and Torres Strait Islander Studies, whose research arm maintains the AUSTLANG database, assigned the additional code N141 for the Gumatj language, a dialect of Yolŋu Matha.[1] I habitually defer to AIATSIS on matters of First Nations resource description: if it’s good enough for them, it’s good enough for me.

Describing books using the Library of Congress Subject Headings (LCSH) is often an exercise in frustration and futility, searching for concepts that don’t exist in the American psyche, but doing justice to the songlines with this vocabulary is flat out impossible. We are reduced to phrases like ‘Folk music, Aboriginal Australian’ and ‘Yolngu (Australian people) — Social life and customs’, flattening the spiral into the linear thinking of the coloniser’s language. From my whitefella cataloguer perspective, the closest LCSH probably gets is the notorious ‘Dreamtime (Aboriginal Australian mythology)’ heading, which as I’ve written before is not appropriate in a contemporary catalogue, and which no LA record appears to have used. But I don’t think this omission is necessarily due to individual cataloguers’ ethics. Songspirals does not name the Dreaming, or discuss it as an academic pursuit. Rather, this book is the Dreaming. It feels a bit daft to say ‘it’s not a subject, it’s a genre’ but even this artificial distinction between what a book ‘is’ and what a book is ‘about’ feels deeply irrelevant to this work.

The English language fails me here. But it’s how I interpret the world. It shapes what I know. And it shapes how I catalogue.

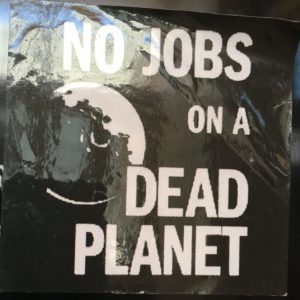

Cataloguers are expected to judge books by their covers, quickly ascertaining the salient facts about a work and categorising it within the boundaries of their library’s chosen schemata. Without having read the book in its entirety, I could tell these records were wonky just by looking at them. But they are structurally wonky. Indigenous knowledge will always sit uncomfortably within Western descriptive practices. It’s more than deciding if something is ‘correct’ or ‘incorrect’. It’s not about yelling at individual cataloguers, although some of these errors were clearly unforced. It’s hard to transcribe names accurately if our systems can’t cope with ‘special characters’ or to represent a work’s collaborative authorship if our data structures insist on privileging one author above all others. It’s difficult to represent the interdisciplinarity of a work if our policies dictate it can only be classified in one spot on a linear shelf. Systems caused these problems. But perhaps systems could help fix them, too.

Our sector chooses the data structures, encoding standards, controlled vocabularies and classification styles that we work within. These choices have consequences. We could make different choices if we wanted to. These things did not fall out of the sky; many people built these structures over many years. But our potential choices are each a product of their time, culture, context and ways of knowing. This doesn’t make them ‘bad’ options, but it does mean they may struggle to describe forms of knowledge so different from their own. Perhaps we could choose a different way.

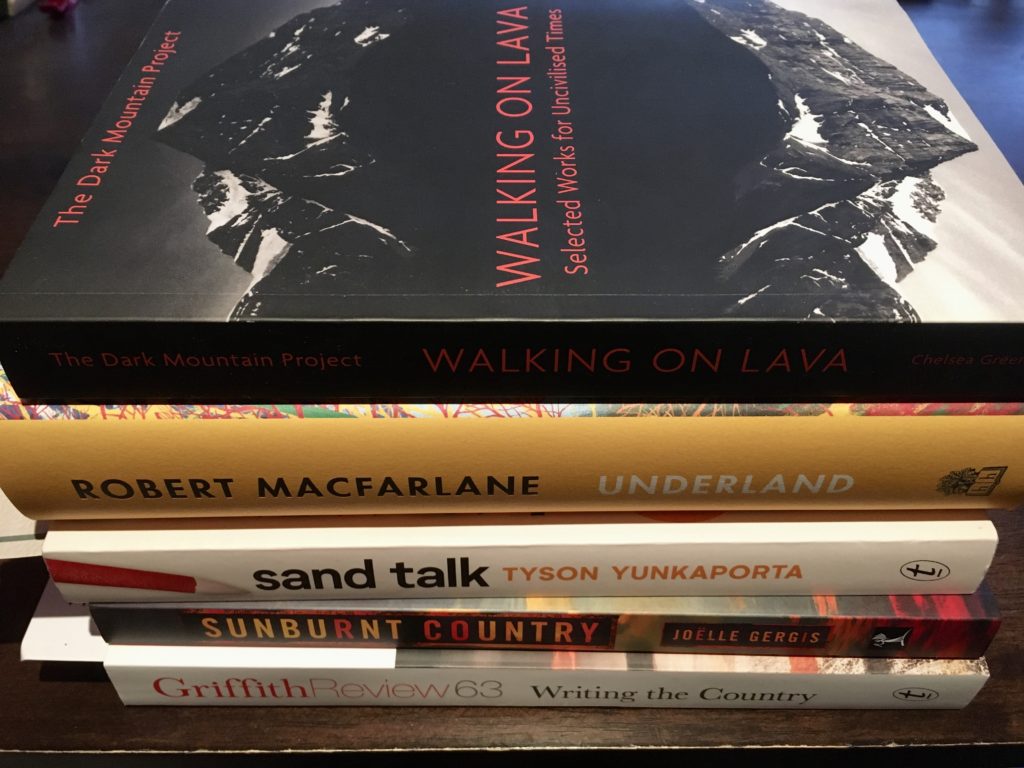

At home, I keep meaning to organise my books properly but never seem to get round to it. I have a shelf of library books (mostly overdue), a shelf of recent acquisitions and a shelf of ‘books that vibe with my thinking of late, and which I ought to read soon’. At various points Songspirals has sat on all three. Perhaps the very notion of fixed metadata is inherently at odds with the cyclical and adaptive nature of songspirals, of oral histories passed down through the generations, layers of wisdom accumulating like layers of the Earth’s crust. Knowledge is always changing and adapting to the world around it. So, too, should our ways of describing that knowledge.

1. My initial training in assigning AUSTLANG codes recommended against using codes for languages where their entry in AUSTLANG was capitalised, as in ‘YOLNGU MATHA’. This indicates a language family, rather than a specific language, and further investigation may be needed. Songspirals itself refers to the language in the book as ‘Yolŋu matha’, so understandably cataloguers outside AIATSIS would have followed the book’s lead.